- Materialize tables

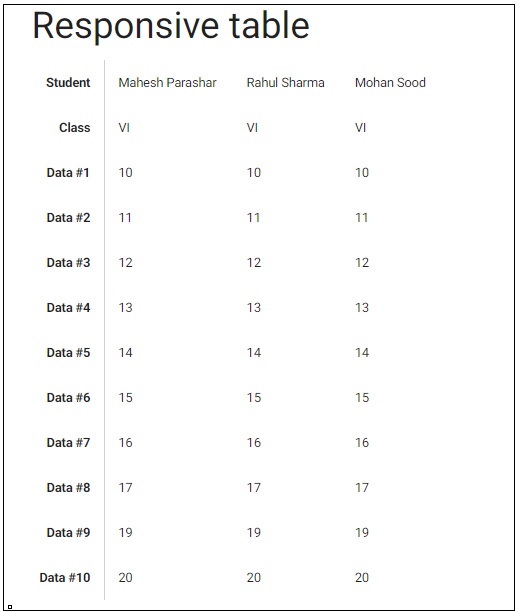

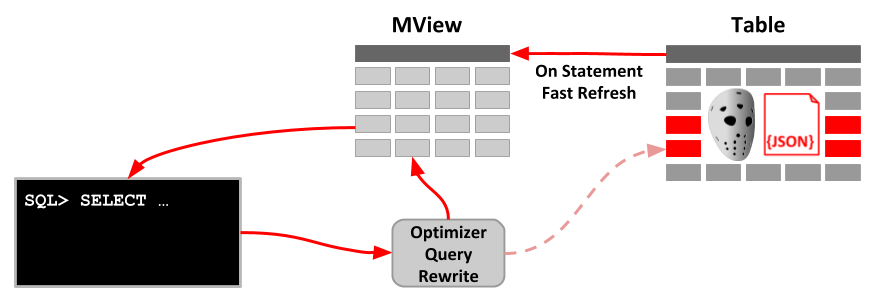

Now that you have a basic understanding of how VertiPaq stores data in memory, you need to learn what materialization is. Materialization is a step in query resolution that happens when using columnar databases. Understanding when and how it happens is of paramount importance. To understand what materialization is, look at this simple query: `EVALUATE ROW ( "Result", COUNTROWS ( SUMMARIZE ( Sales, Sales[ProductKey] ) ) )`. The result is the distinct count of product keys in the Sales table.

Even if we have not yet covered the query engine (we will, in Chapter 15, “Analyzing DAX query plans” and Chapter 16, “Optimizing DAX”), you can already imagine how VertiPaq can execute this query. Because the only column queried is ProductKey, it can scan that column only, finding all the values in the compressed structure of the column. While scanning, it keeps track of the values found in a bitmap index and, at the end, it only has to count the bits that are set. Thanks to parallelism at the segment level, this query can run extremely fast on very large tables, and the only memory it has to allocate is the bitmap index used to count the keys.

The previous query runs on the compressed version of the column. In other words, there is no need to decompress the columns and rebuild the original table to resolve it. This optimizes memory usage at query time and reduces memory reads. The same scenario happens for more complex queries, too, such as one that uses two different tables: Sales and Product.
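The bitmap-based distinct count described here can be illustrated with a small Python sketch. This is not VertiPaq's actual implementation: it assumes the ProductKey column is stored as segments of small integer ids (a dictionary-style encoding, which is an assumption of this example), and the names `scan_segment`, `distinct_count`, and `product_key_segments` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def scan_segment(segment, dictionary_size):
    """Scan one compressed segment and set one bit per value id found."""
    bitmap = bytearray((dictionary_size + 7) // 8)
    for value_id in segment:
        bitmap[value_id >> 3] |= 1 << (value_id & 7)
    return bitmap


def distinct_count(segments, dictionary_size):
    """Scan segments in parallel, merge their bitmaps, count the set bits."""
    with ThreadPoolExecutor() as pool:
        bitmaps = list(
            pool.map(lambda seg: scan_segment(seg, dictionary_size), segments)
        )
    # Merge the per-segment bitmaps with a bitwise OR.
    merged = bytearray((dictionary_size + 7) // 8)
    for bitmap in bitmaps:
        for i, byte in enumerate(bitmap):
            merged[i] |= byte
    # The distinct count is the number of bits that are set.
    return sum(bin(byte).count("1") for byte in merged)


# Hypothetical encoded ProductKey column, split into three segments.
product_key_segments = [[0, 1, 1, 3], [3, 3, 4], [0, 4, 4]]
print(distinct_count(product_key_segments, dictionary_size=6))  # prints 4
```

Only the encoded ids are read in this sketch. No row of the original table is rebuilt, and the only extra memory allocated is one bit per possible key in each bitmap.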
